
Jenkins git webhook

12/30/2023

At which stage in the process is the time being lost.How long is each event taking to process.Which repositories are sending the most events.There was no correlation to tie events to log statements though, a pull request was hacked together (although never cleaned up for merging) that added a correlation ID that could be used when searching the logs.

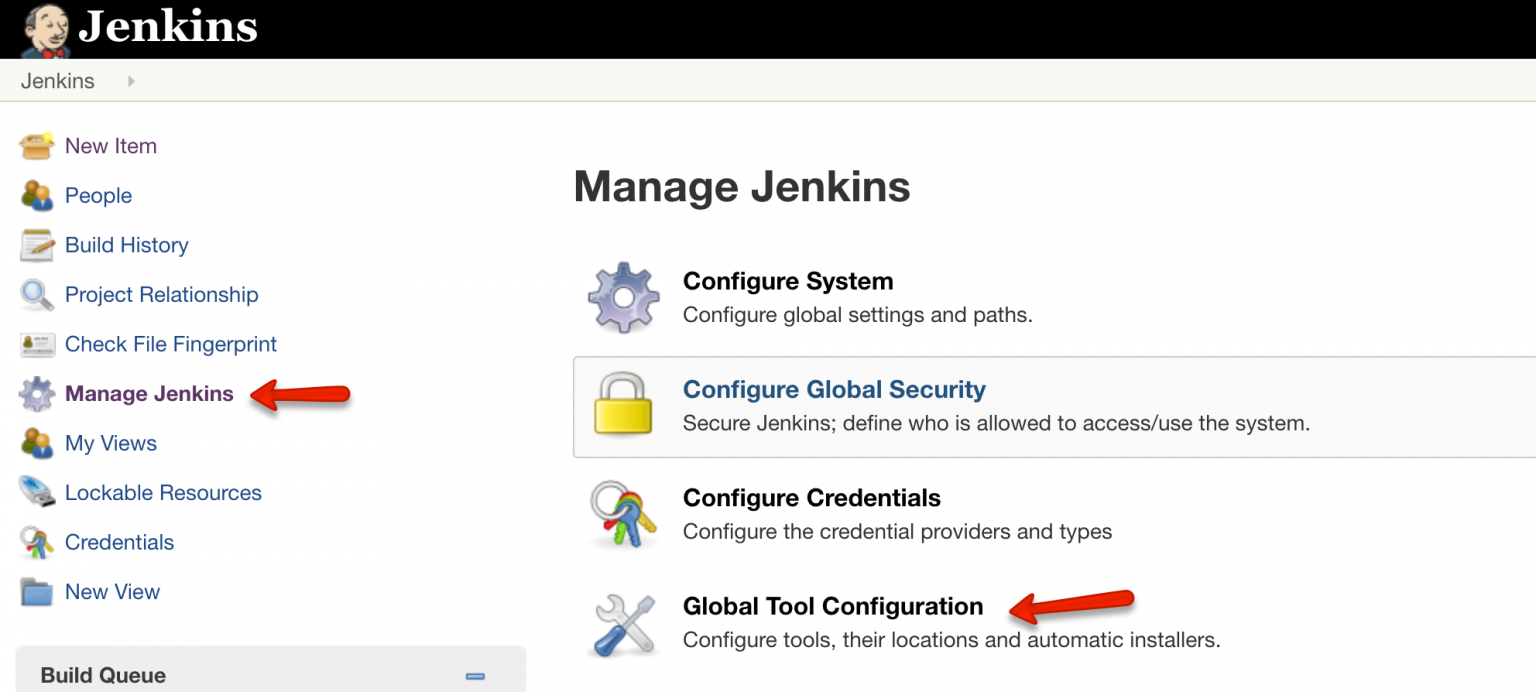

Let's gather some data, JENKINS-68116 Increase log size limit was created to increase the logs. Okay, not fixed but we were in a better position than before. This made a massive difference and initially we thought this may be enough although not a great fix as it was throwing resources at the problem but not fixing the underlying cause.Ĭhecking the queue every now and then was showing much better results, but after a couple of days we started getting reports of the issue reoccurring, and checking the queue at the time showed that there was around 1500 queued. We started with 20 and then went up to 40. The first idea we tried out was changing the thread pool size. One idea came from the issue we opened to use GitHub topics to explicitly opt each repository in rather than using regex filtering. Filter out webhooks from repositories that will never match.Change the logging configuration to retain logs for longer and then do further analysis.There were logs in /var/jenkins_home/logs but they weren't very useful as you couldn't correlate them back to an event and they had an extremely low retention time which gave us around 10-15 minutes of logs. At about this point we created an upstream issue to see if we could get any input from others JENKINS-68116 Slow processing of multi branch events. This shows a very high number of queued tasks, which is likely our issue. Running: .executorService in the Jenkins script console shows: Result: pool size = 10, active threads = 10, queued tasks = 2000, completed tasks = 21888] ThreadPoolExecutor has a very useful toString() method that outputs information on how it's performing. The next step was to try gain some insights into how the thread pool was performing. Hmm that's interesting 10 threads for processing which doesn't scale based on instance size. Looking at SCMHeadEvent.fireLater you'll see the event processing is scheduled with an Executor service which is hardcoded to a maximum of 10. 
Which then calls SCMHeadEvent.fireLater which is part of the SCM API plugin. Reading through the code you can quickly see that this subscriber just relays the events to another API. We can see one that looks to be the most likely PushGHEventSubscriber. Looking at the implementors of the event subscription extension point. The plugin we are interested in is the GitHub Branch Source plugin which provides an SCM source to Jenkins that we can use for Organisation folders. The above logs are an example of one subscribing to push but not the one we are after. The Jenkins GitHub plugin provides an extension point for subscribing to GitHub events. The next level was Jenkins, by searching for PushEvent in the logs we could see events were arriving fine: 09:18:39.142+0000 INFO o.j.p.g.w.s.DefaultPushGHEventSubscriber#onEvent: Received PushEvent for ****/***** from 140.82.115.93 → 10.10.72.35 → 140.82.115.93 ⇒ 09:18:39.324+0000 INFO o.j.p.g.w.s.DefaultPushGHEventSubscriber#onEvent: Received PushEvent for ****/***** from 140.82.115.96 → 10.10.72.4 → 140.82.115.96 ⇒ Interestingly enough that log line isn't from the event processing we are interested in, time to do some research into how this works.

We have a webhook setup at the GitHub organisation level to send these events to Jenkins. The first place we started looking was are the events being delivered. Lots of scanning of repositories also caused us capacity issues due to more builds being triggered than needed which added more delays to our users. This wasn't good for our users though, they had to remember to manually trigger builds after every push which caused delays to their work. In the mean time users were being asked to workaround it by manually triggering their builds and using the 'Scan repository' functionality in Jenkins to make new pull requests show up in the multi-branch pipelines we use.

We've had issues before when GitHub webhooks weren't being delivered so at first it was just put down to an issue with GitHub. We started receiving reports that our main production Jenkins instance was not triggering jobs when people pushed new commits in GitHub GitHub sends events to Jenkins via webhooks I will show you the troubleshooting process and the learnings along the way of how all this worked. Preventing builds starting automatically from webhooks. In this post I will take you through an issue that plagued us for about 6 weeks.
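To see why a pool capped at 10 threads produces queue numbers like the ones above, here is a small standalone Java sketch that uses only `java.util.concurrent`. It is not the SCM API plugin's code. The class and method names (`QueueBacklogDemo`, `saturate`, `resize`) are made up for illustration, and a slow event handler is simulated with a latch. It saturates a 10-thread pool so that the extra tasks pile up in the queue and prints the executor's `toString()`. It then grows the pool to 40 threads, similar in spirit to the 20 and then 40 threads we tried.

```java
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.LinkedBlockingQueue;
import java.util.concurrent.ThreadPoolExecutor;
import java.util.concurrent.TimeUnit;

public class QueueBacklogDemo {

    // Illustrative only: a pool capped at `threads` workers with an unbounded queue.
    // Every submitted task blocks on `release`, so anything beyond `threads` tasks
    // waits in the queue, just like events waiting for a free processing thread.
    static ThreadPoolExecutor saturate(int threads, int tasks, CountDownLatch release) {
        ThreadPoolExecutor pool = new ThreadPoolExecutor(
                threads, threads, 0L, TimeUnit.MILLISECONDS, new LinkedBlockingQueue<>());
        for (int i = 0; i < tasks; i++) {
            pool.execute(() -> {
                try {
                    release.await(); // stand-in for a slow event handler
                } catch (InterruptedException e) {
                    Thread.currentThread().interrupt();
                }
            });
        }
        return pool;
    }

    // Grows a live pool. The maximum is raised first because setCorePoolSize throws
    // IllegalArgumentException if the new core size exceeds the current maximum.
    // Raising the core size starts extra workers to pick up queued tasks.
    static void resize(ThreadPoolExecutor pool, int threads) {
        pool.setMaximumPoolSize(threads);
        pool.setCorePoolSize(threads);
    }

    public static void main(String[] args) throws InterruptedException {
        CountDownLatch release = new CountDownLatch(1);
        ThreadPoolExecutor pool = saturate(10, 2010, release);

        // toString() reports run state, pool size, active threads, queued and completed tasks.
        System.out.println(pool);

        resize(pool, 40);
        System.out.println(pool);

        // Let all the blocked tasks finish, then shut the pool down cleanly.
        release.countDown();
        pool.shutdown();
        pool.awaitTermination(10, TimeUnit.SECONDS);
        System.out.println(pool);
    }
}
```

A bigger pool drains the backlog faster. However, if events keep arriving faster than the handlers can finish them, the queue still grows, which matches the "throwing resources at the problem" caveat.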
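The idea of filtering out webhooks from repositories that will never match can be sketched in Java as a check that runs before an event is queued, so that unwanted events never take up a slot in the processing pool. This is a hypothetical illustration, not the GitHub Branch Source plugin's behaviour. The `Repo` record, the `jenkins` opt-in topic and the repository names are all assumptions, and the sketch assumes the repository's topics are already available when the event arrives.

```java
import java.util.List;
import java.util.Set;
import java.util.regex.Pattern;
import java.util.stream.Collectors;

public class WebhookFilter {

    // Hypothetical, minimal view of the repository an incoming event refers to.
    record Repo(String fullName, Set<String> topics) {}

    // Regex filtering: whether a repository is wanted is inferred from its name.
    static boolean wantedByRegex(Repo repo, Pattern include) {
        return include.matcher(repo.fullName()).matches();
    }

    // Topic opt-in: a repository is wanted only if it explicitly carries the topic.
    static boolean wantedByTopic(Repo repo, String optInTopic) {
        return repo.topics().contains(optInTopic);
    }

    // Drops events for repositories that will never match *before* they are queued,
    // returning the names of repositories whose events should still be processed.
    static List<String> eventsToEnqueue(List<Repo> incoming, String optInTopic) {
        return incoming.stream()
                .filter(repo -> wantedByTopic(repo, optInTopic))
                .map(Repo::fullName)
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        List<Repo> incoming = List.of(
                new Repo("example-org/service-a", Set.of("jenkins", "java")),
                new Repo("example-org/docs", Set.of("documentation")),
                new Repo("example-org/service-b", Set.of("jenkins")));

        System.out.println(eventsToEnqueue(incoming, "jenkins"));
        // prints [example-org/service-a, example-org/service-b]

        System.out.println(wantedByRegex(incoming.get(1), Pattern.compile("example-org/service-.*")));
        // prints false
    }
}
```

An explicit opt-in keeps the decision with each repository's owners and avoids an ever-growing regular expression, at the cost of having to tag every repository that should build.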
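Once every log statement for an event carries a correlation ID, the three questions above can be answered by grouping per-event timings. The Java sketch below assumes each event has already been reduced to a hypothetical `EventTiming` record with received, started and finished timestamps in milliseconds. The record, its field names and the sample values are illustrative and are not taken from the pull request mentioned above. Comparing time spent queued with time spent processing shows at which stage the time is being lost.

```java
import java.util.List;
import java.util.Map;
import java.util.TreeMap;
import java.util.UUID;
import java.util.function.ToLongFunction;
import java.util.stream.Collectors;

public class EventTimings {

    // Hypothetical per-event record: the correlation ID is generated once when the
    // webhook arrives and would be included in every log line written for that event.
    record EventTiming(String correlationId, String repo,
                       long receivedAt, long startedAt, long finishedAt) {
        // Time spent waiting for a free thread in the pool.
        long queuedMillis() { return startedAt - receivedAt; }
        // Time spent actually handling the event.
        long processingMillis() { return finishedAt - startedAt; }
    }

    static String newCorrelationId() {
        return UUID.randomUUID().toString();
    }

    // Which repositories are sending the most events? (keys sorted by repository name)
    static Map<String, Long> eventsPerRepo(List<EventTiming> events) {
        return events.stream().collect(Collectors.groupingBy(
                EventTiming::repo, TreeMap::new, Collectors.counting()));
    }

    // Average time per repository spent in one stage, e.g. queued or processing.
    static Map<String, Double> averagePerRepo(List<EventTiming> events,
                                              ToLongFunction<EventTiming> stage) {
        return events.stream().collect(Collectors.groupingBy(
                EventTiming::repo, TreeMap::new, Collectors.averagingLong(stage)));
    }

    public static void main(String[] args) {
        // Illustrative timestamps in milliseconds, not real measurements.
        List<EventTiming> events = List.of(
                new EventTiming(newCorrelationId(), "example-org/app", 0, 5_000, 5_200),
                new EventTiming(newCorrelationId(), "example-org/app", 100, 6_000, 6_300),
                new EventTiming(newCorrelationId(), "example-org/lib", 200, 7_000, 7_100));

        System.out.println(eventsPerRepo(events));
        // prints {example-org/app=2, example-org/lib=1}
        System.out.println(averagePerRepo(events, EventTiming::queuedMillis));
        // prints {example-org/app=5450.0, example-org/lib=6800.0}
        System.out.println(averagePerRepo(events, EventTiming::processingMillis));
        // prints {example-org/app=250.0, example-org/lib=100.0}
    }
}
```

In this made-up data, events spend seconds waiting in the queue but only a few hundred milliseconds being processed. That pattern points at thread-pool capacity rather than slow handlers.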
